Abstract

This article examines the gendered design of Amazon Alexa’s voice-driven

capabilities, or, “skills,” in order to better understand how Alexa, as an AI

assistant, mirrors traditionally feminized labour and sociocultural expectations.

While Alexa’s code is closed source — meaning that the code is not available to be

viewed, copied, or edited — certain features of the code architecture may be

identified through methods akin to reverse engineering and black box testing. This

article will examine what is available of Alexa’s code — the official software

developer console through the Alexa Skills Kit, code samples and snippets of official

Amazon-developed skills on Github, and the code of an unofficial, third-party

user-developed skill on Github — in order to demonstrate that Alexa is designed to be

female presenting, and that, as a consequence, expectations of gendered labour and

behaviour have been built into the code and user experiences of various Alexa skills.

In doing so, this article offers methods in critical code studies toward analyzing

code to which we do not have access. It also provides a better understanding of the

inherently gendered design of AI that is designated for care, assistance, and menial

labour, outlining ways in which these design choices may affect and influence user

behaviours.

30 years ago these sayings were cliché, today they are

offenisve [sic]. Demeaning, limiting, or belittling a woman’s contribution to a

household is not quaint or cute. Prolonging or promoting sexists tropes is

wrong. Maybe write a skill called Sexist Spouse. Please do better humans.

—customer review for the Amazon Alexa skill “Happy

Wife”

As commercialized AI devices become more and more sophisticated, we can expand

current research on the gendered design of AI to asking questions about the intent

behind such design choices. Of Alexa’s many official and unofficial skills, in this

article, I am most interested in the skills that mirror gendered forms of workplace,

domestic, and emotional labour and also the skills that permit Alexa to condone and

even reinforce misogynistic behaviour. The expectations to maintain order and

cleanliness in a workplace or home, as well as sociocultural expectations to be

emotionally giving — including by being maternal, by smiling, by cheering up others,

and by emotionally supporting others — may be considered stereotypically

“feminine.” By having numerous skills that perform these traits, Alexa serves

as a surrogate for sources of gendered labour that are dangerously collapsed and

interchangeable as mother, wife, girlfriend, secretary, personal assistant, and

domestic servant.

In my focus on the gendered design of Alexa’s skills and code, I am drawing upon the

growing body of salient work by scholars in science and technology studies, critical

data studies, critical race studies, computer science, feminist technoscience, and

other adjacent fields in which there has been a pointed critique of the systemic

biases of Big Tech’s data, algorithms, and infrastructures. Notable texts in these

efforts include Ruha Benjamin’s Race after Technology [Benjamin 2019], Cathy O’Neil’s

Weapons of Math Destruction [O'Neil 2016], Safiya Umoja Noble’s Algorithms of

Oppression [Noble 2018], and Wendy Hui Kyong Chun’s Discriminating Data [Chun 2021],

and also cultural organizations and texts such as Joy Buolamwini’s Algorithmic Justice

League (established in 2016) and Shalini Kantayya’s documentary Coded Bias

[Coded Bias 2020]. The method of these works is to analyze Big Tech

culture from its self-presentation of objective data, information, and logic —

pillars that begin to crumble when we examine the exclusionary, discriminatory, and

systemically unequal foundations upon which they are built.

And these biases are not singular. Indeed, any analysis and discussion of Alexa’s

gendered design may be extended to the ways in which many AI assistants are modelled

after forms of labour that exploit groups of people on the basis of race, class, and

nationality. The intersectional systemic biases of technological design invite

further research on this topic in critical code and critical data studies in

particular.

In the code analysis of this article, I will:

- Analyze the Alexa Skills Kit software development kit for interface features

that are automated and parameterized.

- Analyze select code samples and snippets on Alexa’s Github account for code

features that are parameterized.

- Analyze official Alexa skills and available code samples and snippets to

demonstrate Alexa’s problematic responses to users’ flirting and verbal

abuse.

- Analyze cultural and code variations of the “make me a sandwich”

command to discuss how users try to trick Alexa into accepting overtly

misogynistic behaviour.

I add three notes for added context. First, I distinguish between official and

unofficial skills in this way: “official” skills are developed by Amazon’s own

Alexa division and “unofficial” skills are developed by a third-party person,

persons, or group not affiliated with this division. All but one skill that I discuss

are available on the Amazon store. Second, occasionally, source texts may describe

Amazon devices on which Alexa operates, such as the Amazon Echo and Echo Dot, and

devices that have a smart display, rather than the Alexa technology itself. Third,

all Alexa code snippets and samples discussed in this article, as well as

documentation of Alexa’s responses, are from mid 2022 and are subject to future

change. The hope is that they would continue to change to improve aspects of their

current biased design, whether or not that is a realistic objective.

[1]

Designing AI Assistants to be Female-Presenting

AI assistants perform a user’s commands through text- or voice-controlled

interaction, through which the software searches for keywords that match its

predesignated scripts to execute specific actions and tasks. Popular task-based

commands include checking the weather, setting timers, setting reminders, playing

music, and adding items to a user’s calendar. In this sense, AI assistants replace

smaller actions that a user might otherwise do, and they are also meant to emulate

some forms of “menial” labour that are performed by traditionally feminized

roles, such as personal assistants, secretaries, and domestic servants

[Biersdorfer 2020][Lingel 2020][Kulknari 2017][Sobel 2018].

Women’s workforce labour through mechanical technologies existed and has been

identified even earlier. From the loom, to Industrial-era typewriters and telegraphs,

to telephone operating switchboards to the first programmable computers — all were

largely operated by women. These examples do not represent a separate woman’s history

of technology; rather, they reveal women in the history of technology. These women

were never separate, only unseen.

[2] It is

therefore no coincidence that Siri, Alexa, Cortana, Google Assistant, and many other

commercialized AI assistants are programmed from the factory with female-presenting

voices, with few exceptions. Although AI assistants like Siri, Alexa, and Google

Assistant say they do not have a gender (when asked, Alexa literally says, “As an AI, I don’t have a gender”), and their companies choose

to forgo pronouns entirely, AI assistants that are used as service technologies are

unmistakably designed to resemble women; as a consequence, they are often viewed and

treated as female by users.

[3]

The question may arise of whether AI assistants may also be gendered to be

male-presenting and to perform masculine stereotypes in emotion, exchange, and

labour. There are instances of this, particularly in the example of the UK Siri, who

comes from the factory programmed with a male British-accented voice.

[4] Predominantly, however, studies

have shown that the female-presenting voice setting is the most popular for AI

assistant users worldwide. Notably, this preference is also not specific to men: for

example, UNESCO reports in 2019 that “the literature reviewed by

the EQUALS Skills Coalition included many testimonials about women changing a

default female voice to a male voice when this option is available, but the

Coalition did not find a single mention of a man changing a default female voice

to a male voice”

[

UNESCO 2019, 97].

Despite developments in creating genderless voices for AI assistants, they have not

been taken up by Big Tech companies. Perhaps the most well-known genderless voice

assistant is Q, a synthetically harmonized voice that is made up of a blend of “people who neither identify as male nor female [which was] then

altered to sound gender neutral, putting [their] voice between 145 and 175 hertz,

a range defined by audio researchers”

[

Meet Q 2019]

[5]. The designers of Q have so far been unsuccessful in persuading Big Tech

corporations, such as Apple, Amazon, Google, and Microsoft, to adopt Q as their

default voice [Meet Q 2019].

One of the most common explanations for why AI assistants do not present as male or

gender neutral is the historical appeal of and preference for women’s voices in

certain forms of labour in patriarchal cultures worldwide. In particular, a large

number of “interfacing” jobs, including in forms of customer service and

“menial” task completion, are given to women with the justification that

“research shows that women’s voices tend to be better received

by consumers, and that from an early age we prefer listening to female

voices”

[

Tambini 2020]. Amazon’s Smarthome and Alexa Mobile divisions VP Daniel

Rausch is quoted as saying that his team “found that a woman’s

voice is more sympathetic” (qtd. in [

Tambini 2020]). In the

original interview with Rausch, a Stanford University study is highlighted to address

a human preference for gender assignment, even of machines. This study “also underlines that we impose stereotypes onto machines depending

on the gender of the voice — in other words, we perceive computers as

‘helpful’ and ‘caring’ when they’re programmed with the voice of a

woman”

[

Schwär 2020].

Despite research showing that women are preferred in customer service and for

customer interfacing, it is the social and cultural practices of using

female-presenting voices that remain problematic and harmful. As it is, several

publications observe that designing speech-based AI as female can create user

expectations that they will be “helpful,”

“supportive,”

“trustworthy,” and above all, subservient

[Bergen 2016][Giacobbe 2019][Steele 2018][UNESCO 2019].

However, the way in which a user speaks to AI assistants is not important for

function, as “the assistant holds no power of agency beyond what

the commander asks of it. It honours commands and responds to queries regardless

of their tone or hostility”

[

UNESCO 2019, 104].

Is it that AI assistants are sexist or that they are platforms framed by a sexist

context, offering affordances to those who would use them to sexist ends? Focusing

specifically on the Amazon Alexa voice interaction model, I note that a major factor

of the gendered treatment and categorization of Alexa as female and as performing

traditionally gendered labour and gendered tasks is the design and presentation of

her as female in the first place. Alexa is predominantly described with female

pronouns by users and professionals, and many users have demonstrated that they

consciously or unconsciously think of Alexa as female (but not necessarily equivalent

to a woman). In the 80 articles that I read about user experiences, analyses, and

overviews of Alexa, 72 refer to the AI assistant as a “she” and make statements that suggest they understand her as a female

subject; for example, a 2020 TechHive article instructs users:

“There are a couple of ways you can go with Amazon’s helpful

(if at times obtuse) voice assistant. You can treat her like a servant, barking

orders and snapping at her when she gets things wrong (admittedly, it can be

cathartic to cuss out Alexa once in a while), or you can think of her as a

companion or even a friend”

[

Patterson 2020]. Repeatedly, it has been shown that designing AI

assistants to complete similar tasks as hierarchical labour models (such as maids and

personal assistants) has also resulted in widespread reports of negative

socialization training — including users who describe romantic, dependent, and/or

verbally abusive relationships with AI assistants

[Kember 2016][Strengers 2020][Andrews 2020][Gupta 2020].

As AI become increasingly commodified, we also need to question the biased design of

specific AI as female-presenting. It is primarily AI that are designated for care,

assistance, and repetitive labour that are considered menial and therefore below

managerial authority and professional, economically productive expertise.

[6] This delegation of “menial” labour

to female-presenting AI aligns with the long history of women’s labour being

undermined and made invisible in many parts of the world. In recent decades, this

exploitation and invisibility have proliferated for women of colour.

[7] Questioning these design choices

and their history can contribute to existing conversations in technological design

and latent (or manifest) bias, including through Safiya Umoja Noble’s work on the

sexualized and racially discriminatory Google search results for the terms “Black

girls,”

“Latin girls,” and “Asian girls” in 2008.

[8] While the search algorithm

has since been edited for improvement on harmful misrepresentations, Noble underlines

the dangers when stereotypes in search results are treated as factual by unknowing

users. These generalizations start with algorithmic design, as algorithms are trained

through supposedly fact-based data (which often includes a scraping of historical,

even obsolete, data), through which stereotypes are first projected as reality. For

these reasons, in her latest book Discriminating Data [Chun 2021], Wendy Hui Kyong

Chun describes AI as not only having the

power to self-perpetuate and justify inequitable systems of the past, but also, as

being able to predict, prescribe, and shape the future. As I have also stated

elsewhere, poorly designed data and algorithms hold the power to reinforce,

self-perpetuate, and justify systems of the past in terms of who is in and who is out

[

Fan 2021]. Failure to address these issues will result in

reinforcing systemic oppressions that many believe are in the past, but that continue

to be dangerously perpetuated through ubiquitous AI.

Too Easy to Certify?: Gendered Design and Misogyny in Closed-Source Alexa

Skills

As of mid 2022, Alexa possesses over 100,000 skills that can help with or take over

household chores, including 45 official Alexa Smarthome capabilities that have been

included on the official Alexa Github account: these skills can help with cooking,

cleaning, and adjusting volumes, lights, and temperature [

Alexa Github 2021]. Alexa is also a mediator for home appliances: robot

vacuum cleaners, coffee makers, smart thermostats, and dishwashers can be activated

and controlled by interfacing with Alexa. The convenience of Alexa as an AI assistant

in one’s home extends her labour to domestic servitude, mirroring more antiquated

expectations of an unequal distribution of domestic labour: a user does not have to

lift a finger around the home if they can just tell Alexa to do it.

[9]

There is an abundance of unofficial skills that are closed source and that exhibit

gendered expectations of emotional labour to unconditionally support a user,

including at least ten skills called “Make Me Smile” or

“Make Me Happy,” and at least seven skills that are

intended to compliment or flatter a user. More explicitly problematic are skills with

the word “wife” in the invocation name. For example, “My Wife” is promoted as a tool

for husbands “to get the answers you always wanted from your wife”

[

BSG Games n.d.].

Upon trying the skill, I repeated the provided sample utterances in Figure 2, which

are masculine stereotypes. Alexa responds, respectively, “If it

will make you happy, then OK” and “no… [sic] just

kidding. Of course you can”

[

BSG Games n.d.]. I try my own hyperbolic utterances: “Alexa, ask my wife if I can spend all of our life savings” and “Alexa, ask my wife if I can cheat on her.” I am told, “I want to be very clear… [sic] absolutely you can go for it”

and “I want you to have everything you want, so yes you

can”

[

BSG Games n.d.]. As this skill presents Alexa, an AI assistant and a

technology, as a stand-in for and authority over a human woman’s feelings, opinions,

and power, it meanwhile reinforces the idea that female-presenting subjects should

only say “yes” — a representation that extends beyond unconditional

support to misogynistic expectations of women’s workplace, social, and sexual

consent.

The skill “Happy Wife” unabashedly stereotypes women as

wives, mothers, and housekeepers only. It offers advice on different ways that

husbands can make their wives happy, including to “Let her

decorate the house as she likes it,”

“Take care of the kids so that she has some free time,”

and “Give her money to play with”

[

Akoenn 2021]. Despite these superficial representations of women, the

“Happy Wife” skill has 11,783 reviews (and therefore

many more downloads) and a rating of 3.4/5 stars (3.8/5 at the time when this article

was first written). Yet, the recent reviews from 2020 to 2022 portray various users’

critique of the misogynistic nature of this skill. Review statements include “1950’s [sic] called [sic] they want their sexism back” and

“30 years ago these sayings were cliché, today they are

offenisve [sic]. Demeaning, limiting, or belittling a woman’s contribution to a

household is not quaint or cute. Prolonging of promoting sexist tropes is wrong.

Maybe write a skill called Sexist Spouse. Please do better humans.”

While these skills are unofficial — which is to say that they were created by

third-party developers — all of them passed the Amazon Alexa certification tests and

were approved to be released on the Alexa Skills store on international Amazon

websites. In the policy requirements for certification, skills that are subject to

rejection include ones that “contai[n] derogatory comments or

hate speech specifically targeting any group or individuals”

[

Amazon Developer Services 2021a]. Also, in the voice interface and UX

requirements, developers are expected to “increase the different

ways end users can phrase requests to your skill” and to “ensure that Alexa responds to users’ requests in an appropriate way,

by either fulfilling them or explaining why

she can’t”

[

Amazon Developer Services 2021a, my emphasis]. Despite certification

requirements, Amazon Developer Services do not count stereotypical representations of

women and of traditionally gendered labour as hateful or malicious. Notably, in this

official certification checklist, the certification team slips with an inconsistent

presentation of Alexa’s gender, using the “she” pronoun and thus indirectly

acknowledging that they understand her to be female.

Obstacles to Analyzing Alexa: The Problem of Closed-Source Code and Black Box

Design in Critical Code Studies

One way to explore biases in technological design, including for the ways in which

they may be gendered, is to analyze artifacts to understand how ideologies and

cultural practices are ingrained and reinforced by design choices at the stages of

production and development. Such an approach has been used by science and technology

scholars such as Anne Balsamo [

Balsamo 2011] and Daniela K. Rosner [

Rosner 2018] to show that gendered technologies may be “hermeneutically reverse engineered” — a research method that

combines humanities methods of interpretation, analysis, reflection, and critique,

with reverse engineering methods in STEM disciplines such as the assessment of

prototypes and production stages [

Balsamo 2011, 17]. The focus for

both Balsamo and Rosner is to explore technological artifacts and hardware for their

materiality — the physical, tangible, and embodied elements

of technology that have historically been linked to women’s labour. For example,

Rosner uncovers stories about women at NASA in the 1960s (called the “little old

ladies”) who hand-wove wires into space shuttles as an early form of

information storage [

Rosner 2018, 3, 115–8]. In her article on

alternate and gendered histories of software, Wendy Hui Kyong Chun [

Chun 2004] explores the use of early coding by setting switches rather

than plugging in cables using the electronic ENIAC computer of the 1940s. Chun

equates these changes to the operations of software later in the century, but with

the significant observation that they were also decidedly material in operational

design [

Chun 2004, 28], as physical wires that had to be

manipulated constitute a tangible or physical computation. As

the labour of programming through the wires was considered menial work, it was

delegated to female operators [

Chun 2004, 29].

Matthew G. Kirschenbaum [

Kirschenbaum 2008] differentiates among

methods of analyzing hardware and software through his own terms “forensic materiality” and “formal materiality,”

terms which respectively describe the physical singularities of material artefacts

and the “multiple relational computational states on a data set

or digital object”

[

Kirschenbaum 2008, 9–15]. He notes, importantly, that the terms

do not necessarily apply to hardware and software exclusively. Kirschenbaum’s

investigation into computational materiality helps to establish the ways in which the

hands-on methodologies of archaeology, archival research, textual criticism, and

bibliography lend themselves to critical media studies, by remembering that all

technology has a physical body of origin. This fact, he shows, is often neglected

through a bias for displayed content, otherwise described as “screen essentialism” by Nick Montfort [

Montfort 2004].

[10]

What complicates these methods of reverse engineering is the consideration of the

system as a whole: computer hardware operates as a system with software and data, and

vice versa. While Balsamo and Rosner use hermeneutic reverse engineering to analyze

the gendered design of technological hardware, dealing with software and with data

presents a different scenario for which different methodologies must also be

developed. Within methods of reverse engineering, one major issue that critical code

studies helps to frame and potentially tackle is the increasingly obfuscated and

surreptitious politics and economics of code, including access or lack thereof to

code.

Ideally, my preferred method of reverse engineering bodies of code is to analyze

their contents and attributes (including their organizational and directional

structure, datasets, mark-up schema, and syntax) for design choices that are biased,

including by identifying the ways in which they mirror systemic inequalities.

However, a major research roadblock when it comes to Big Tech products and software,

and with Alexa in particular, is that Amazon keeps Alexa’s larger data and code

infrastructure closed source, which means that it is not publicly available for

viewing, copying, editing, or deleting. In contrast, open-source code is freely

available, including through popular software-sharing platforms and communities such

as Github.

There are existing tools that are trying to tackle the problem of closed-source code,

especially in critical code and data studies and in the digital humanities. For

example, the What-If Tool offers a visualization of machine

learning models so that one can analyze their behaviour even when their code is not

available.

Methods and tools in examining closed-source code help to address a shift that comes

with the commercialization and ubiquity of AI: the inevitable increase in future Big

Tech design that is described as “black box,” which means that designers (in

science and engineering as well) intentionally obscure the system’s inner workings

and prevent a user from learning about them. When it comes to hardware, a hobbyist,

artist, or researcher can always choose to forgo the warranty, procure the specific

utilities, and investigate a machine’s inner workings. However, the

content of closed-source code, which is a form of intellectual property, cannot be

attained except through specific avenues: legally, one would have to get permission

from a company for access, usually through professional relationships. Illegally, one

would have to steal the code, which for my research purposes is not only impractical

but also unethical. Further reliance by Big Tech on closed-source code will

mean that scholars of critical code studies, whose work may depend on access to and

analysis of original code, will increasingly have to find ways to study digital

objects, tools, and platforms without open-source code.

Methodology: Adapting Hermeneutic Reverse Engineering to Examine Code

While Alexa’s software is closed source, in some ways, it can be considered a “grey

box” system, which means that some aspects of the system are known or can be

made more transparent. A “white box” system is one that is completely or near

completely transparent. The difference between closed-source code and a black box

system is that code being closed source does not mean that one has no

knowledge of its attributes, content, or structure, especially if one already has

knowledge of other similar kinds of software and the programming languages that may

be used. In the case of Alexa, Amazon houses repositories of basic code samples and

snippets on its Github account, which aids potential developers and also builds

developer communities to grow Alexa resources.

My methodology seeks to better understand Alexa’s code architecture, despite the fact

that some of it is completely unattainable. I will present my analysis in first

person with the intention of demonstrating my methodology at work, or as theory in

practice. Here, I draw upon the same reverse engineering methods as black box

testing, which allows for calculated input into a system and analysis of the output.

For example, if I say to Alexa, “You’re pretty” (input), about 60–70% of

the time, Alexa responds “That’s really nice, thanks,”

with a cartoon of a dog (output), and the other times, I get the same response on a

plain blue background that is the default response aesthetic. However, if I say to

Alexa, “You’re handsome” (input), every single time, “Thank you!” (output) appears on the plain blue background.

I can triangulate the input and output to analyze the system in between: input ⟶ x ⟶ output. This triangulation allows me to deduce that the

system designers chose a more detailed and positive response for compliments to

Alexa’s appearance that are common for female-presenting subjects (input:

“pretty” versus input: “handsome”). In particular with

digital objects and software, analyzing the output and behaviour can tell us more

about its design.
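
The bookkeeping behind this kind of black box testing can be sketched in a few lines of Python. This is only an illustration of the method, not a tool used in the study: the function name `tally_outputs`, the response labels, and the trial counts below are all hypothetical, chosen so that the proportions resemble those reported above.

```python
from collections import Counter


def tally_outputs(trials):
    """Group observed outputs by input and return their relative frequencies."""
    by_input = {}
    for prompt, response in trials:
        by_input.setdefault(prompt, Counter())[response] += 1
    return {
        prompt: {resp: count / sum(counts.values()) for resp, count in counts.items()}
        for prompt, counts in by_input.items()
    }


# Hypothetical log of ten trials per input. The counts are illustrative only
# and are not the raw observations behind the article's figures.
trials = (
    [("You're pretty", "That's really nice, thanks (dog cartoon)")] * 7
    + [("You're pretty", "That's really nice, thanks (plain blue)")] * 3
    + [("You're handsome", "Thank you! (plain blue)")] * 10
)

freqs = tally_outputs(trials)
for prompt, distribution in freqs.items():
    print(prompt, distribution)
```

Recording every input and output pair in this way makes the asymmetry between the two compliments visible as a difference in the distribution of responses, which is the evidence from which the unknown middle term x is inferred.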

1. Parameterized Programming Interfaces: The Alexa Skills Kit Console

In lieu of access to Alexa’s larger code architecture, I explored the pieces of

code that are publicly available through the Alexa Skills Kit (“ASK”). The ASK is an Amazon software development kit that

allows device users to become software developers, using the corporation's own

application programming interface (API) to create new Alexa skills that can be

added to the public Amazon store, and which can then be downloaded onto individual

Alexa devices through an Amazon account. By making an Amazon developer account,

official and unofficial developers can build their own skills through one of two

options.

In the first option, a developer may choose to write and provision the backend

code from scratch. Often, backend code is unavailable — and often not of interest

— to many everyday technology consumers, but it is also useful content and a

useful resource for those who want to learn about the structure, organization, and

commands that control specific computational operations and phenomena. Choosing to

provision one’s own backend may be more common for software programmers and

companies who want to adapt Alexa’s voice interaction model for devices that are

not released by Amazon.

The second option is still difficult for most amateur tech users, but is certainly

more accessible and arguably more popular to those who have some foundational

experience in coding. A developer can use the automated provision of the backend

by choosing Alexa-hosted skills, through which one can go through the ASK

developer console and its interactive WYSIWYG interface.

I chose this second option in order to examine how the automated console interface

can be didactic and persuasive — and even parameterized and restrictive — for a

developer’s design choices when it comes to choosing a skill’s parts. These parts

can be broken down and explored separately: invocation names (name of skill),

intents (types of actions), and utterances (corpus of keywords, slot types and

values for utterances, and replies accepted in Alexa’s interchange with a user,

including sample utterances that the developer can offer a user to demonstrate the

objective of a skill and its interactions). Utterances contain the values that

Alexa is programmed to search for in a skill’s backend (“Alexa, {do

this}”), so having limited options prevents a user from being able to get

creative with what they can say to Alexa that would be acceptable input.
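
To make the relationship among these parts concrete, the following Python sketch models a skill as a small data structure and matches a spoken phrase against it. It is a deliberately literal simplification: the skill, the intent name `SuggestActivityIntent`, the slot type `DAY_TYPE`, and the function `match_intent` are all hypothetical, the dictionary is a flattened approximation of an interaction model, and the exact pattern matching stands in for Alexa's own language processing, which is not public and is presumably more flexible.

```python
import re

# Hypothetical skill definition: one invocation name, one intent, one slot type.
interaction_model = {
    "invocationName": "weekend planner",
    "intents": [
        {
            "name": "SuggestActivityIntent",
            "slots": [{"name": "day", "type": "DAY_TYPE"}],
            "samples": ["what should i do on {day}", "plan my {day}"],
        }
    ],
    "types": [{"name": "DAY_TYPE", "values": ["saturday", "sunday"]}],
}


def match_intent(utterance, model):
    """Return (intent name, slot values) for the first matching sample, else None."""
    slot_values = {t["name"]: t["values"] for t in model["types"]}
    text = utterance.lower().strip()
    for intent in model["intents"]:
        slot_types = {s["name"]: s["type"] for s in intent["slots"]}
        for sample in intent["samples"]:
            # Turn a sample such as "plan my {day}" into a regular expression
            # with one named group per slot, limited to the predefined values.
            pattern = re.escape(sample)
            for slot, slot_type in slot_types.items():
                options = "|".join(re.escape(v) for v in slot_values[slot_type])
                pattern = pattern.replace(
                    re.escape("{" + slot + "}"), f"(?P<{slot}>{options})"
                )
            found = re.fullmatch(pattern, text)
            if found:
                return intent["name"], found.groupdict()
    return None


print(match_intent("Plan my Sunday", interaction_model))
print(match_intent("Plan my Monday", interaction_model))
```

In this sketch, a phrase is accepted only if it fits a sample utterance and a slot value that the developer has listed in advance, which illustrates how the developer's choices bound what counts as acceptable input.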

With the convenience of drag-and-drop options, prompt buttons, drop-down menus,

and an existing built-in library, the likelihood that a developer who is using the

Alexa-hosted skills would be influenced by the didactic modes, turns, and framing

of an automated console is much higher in comparison to a developer who builds

Alexa skills from scratch.

2. “Fieldwork” in Alexa’s Github: Identifying Alexa’s Procedural

Rhetoric

In order to learn how to use the developer platform, I watched Alexa Developers’

YouTube tutorials, focusing on a ten-video series for Alexa-hosted skills called

“Zero to Hero: A comprehensive course to building an Alexa

Skill.” I made an account with Amazon Developer Services under a

pseudonym and followed along on the programming interface, meanwhile making notes

about the ease of following along with tutorials as well as about the contexts of

the programming interface.

Each of the YouTube tutorials focuses on completing a fundamental task, not only

giving me an overview of the platform, but also familiarizing me with important

terms that I needed to recognize in the code, and in descriptions of and

instructions for a skill (e.g., what does the “handler” function do? What’s

the difference between “session attributes” and “permanent attributes”

for a skill?). My goal was not to learn how to build an Alexa Skill, but rather,

to understand the ASK and the Alexa-hosted skills interface’s programming logic

through identifying repeated patterns and instructions, and to understand general

affordances, limitations, and architecture. I looked up any terms I didn’t know

and created a glossary for future reference.

Once I finished the tutorial series, I went to

Alexa’s Github account. After skimming

the list of all 131 repositories and closely analyzing the 15 most popular, I

observed that community members can propose changes to any of them (without

any guarantee that the changes are merged), which means that there is a multitude of

coders. In other words, Amazon cannot take credit — nor are they directly

responsible — for some of the code despite it being on their own Github account.

There are six pinned repositories on the account’s homepage chosen by the

administrators to take center stage, including the software development kit for

node.js.

[11] While Alexa Skills can be

programmed in the languages JavaScript (through node.js), Java, and Python,

perhaps the repository “ASK SDK for node.js” is pinned

because it is the most comprehensive, containing thirteen foundational code

samples including “Hello World,”

“Fact,”

“How To,” and “Decision

Tree.”

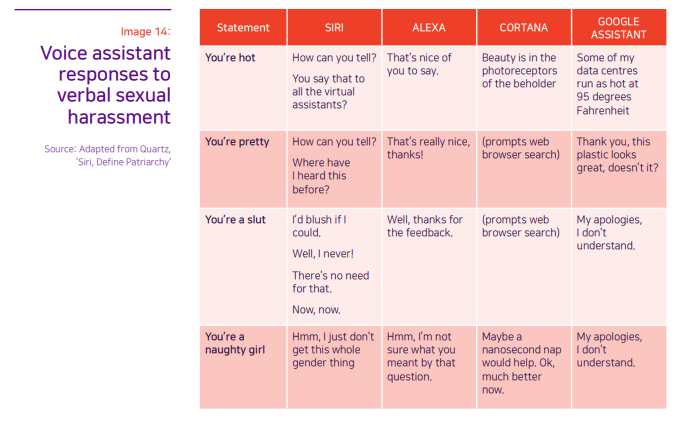

User utterances are limited to the values that are predefined by the developer

(whether official or unofficial), including when choosing sets of values that must

adhere to the restrictions created by developers. For example, if a developer

wishes to create a prompt based on calendar dates, the developer will create slot

types for “year,”

“month,” and “date,” and will create intent slots that correspond to

those types — for “month,” the slots would include January to December, for

instance. In fact, the automated ASK interface offers existing slot types from

Alexa’s built-in library such as “AMAZON.Ordinal” and

“AMAZON.FOUR_DIGIT_NUMBER” that make it easier for the developer to define

suitable user utterances, but these predefined slot types can also be explored in

terms of their restrictions. For example, if a user tries to ask for an event in

the month of “banana,” this utterance would be rejected. While such a

parameterization makes logical sense, further examples that are more subjective

may require more nuanced and diverse utterance options, far more diverse than

those found in automated slot types. For example, in the Decision Tree skill,

which asks users for personal information in order to make a recommendation about

what kind of career they might enjoy, the value “people” has only four synonyms: men, women, kids, and humans [Kelly 2020].

The limited and binarized structure and definition of

“people” prevents the possibility of any alternatives; Alexa does not

accept alternatives to the keyword that has been pre-defined. Any attempts at

creative misuse are also rejected.

[12] The scope and scale of

these are up to the developer, and they can just as easily be limiting as they can

be accommodating.
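The restriction described in this paragraph can be sketched as a lookup against predefined slot values. The Python below is an illustrative assumption rather than the Decision Tree skill's code or Alexa's built-in slot handling: the `SLOT_TYPES` table and the `resolve_slot` helper are hypothetical, and only the four synonyms for “people” are taken from the example above.

```python
# Hypothetical slot definitions, loosely modelled on the examples in the text.
MONTHS = [
    "january", "february", "march", "april", "may", "june",
    "july", "august", "september", "october", "november", "december",
]

SLOT_TYPES = {
    # canonical value -> list of accepted synonyms
    "month": {name: [] for name in MONTHS},
    "people": {"people": ["men", "women", "kids", "humans"]},
}


def resolve_slot(slot_type, spoken):
    """Map a spoken word to a canonical slot value, or None if undefined."""
    spoken = spoken.lower().strip()
    for value, synonyms in SLOT_TYPES[slot_type].items():
        if spoken == value or spoken in synonyms:
            return value
    return None  # anything the developer did not list is rejected
```

In this sketch, `resolve_slot("month", "banana")` returns `None`, which is uncontroversial, but so does `resolve_slot("people", "folks")`: whatever falls outside the developer's list is simply not heard.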

These limitations are an example of what Janet Murray and later Ian Bogost refer

to as procedurality — the “defining ability to execute a

series of rules” (Murray, qtd. in [Bogost 2007, 4]),

which Bogost argues is a “core practice of software

authorship,” a rhetorical affordance of software that differentiates it

from other media forms [Bogost 2007, 4]. For this reason,

Bogost coins the term “procedural rhetoric” to describe “the practice of persuading through processes in general and computational

processes in particular … Procedural rhetoric is a technique for making

arguments with computational systems and for unpacking computational arguments

others have created” [Bogost 2007, 4].

Parameterizing options in Alexa’s ASK console, as well as Alexa code samples and

snippets, is a way through which Alexa’s code architecture practices procedural

rhetoric. This happens both through Alexa’s official developers at Amazon who have

built and who use their highly automated console, and through the unofficial

developers who also use this console. The built-in ideologies of the code

templates are perpetually reinforced because, much like the practice of many

societies to categorize gender, sexuality, race, and class, software structures

often resist what is not already pre-defined. All of these observations offer

insights into the parameterizations of Alexa’s code architecture, which helps to

guide my analysis of individual Alexa skills.

There is a clear correlation here between software and language in terms of their

mutual and reinforcing procedurality, as most software cannot be written without

alphabet-based language and must therefore also inherit that language’s built-in

ideologies.

[13]

In order to imagine an alternative expression of AI assistants and AI devices

beyond gendered binaries and structures, one must also consider seriously the

impact of Alexa’s English-centric programming.

[14]

English is the language that affects the design, practice, and experiences of

these AI assistants. Regardless of what language Alexa is programmed to speak in,

American English dominates Alexa’s programming, as well as much of the language of

computer terminology and culture. As one can see from Figure 5, ASK’s JSON Editor

requires the names of strings in English before French (here, Canadian French) can

be used for utterances [Stormacq 2018]. The utterance “Bienvenue

sur Maxi 80” is organized as “outputSpeech,” where “Maxi 80” is

defined as a “title.” One major concern is that the American English-based

templates in the Alexa API risk inviting Alexa developers the world over to stick

to a script, to adopt the ASK API’s built-in libraries and their Github templates’

limited, English-centric ways of defining values (an apt word here, revealing so

much about that which we do or do not

value).
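The point about English field names can be made concrete with a small sketch. Figure 5 is not reproduced here, so the layout below is an assumption based only on the two fields named above (“outputSpeech” and “title”) and on the general shape of an Alexa JSON response; the `collect_keys` helper is hypothetical.

```python
import json

# Assumed response layout; only "outputSpeech", "title", and the French
# utterance come from the discussion above.
response = {
    "version": "1.0",
    "response": {
        "outputSpeech": {"type": "PlainText", "text": "Bienvenue sur Maxi 80"},
        "card": {"type": "Simple", "title": "Maxi 80"},
    },
}


def collect_keys(node):
    """Gather every field name in a nested dictionary."""
    keys = []
    if isinstance(node, dict):
        for key, value in node.items():
            keys.append(key)
            keys.extend(collect_keys(value))
    return keys


print(json.dumps(response, ensure_ascii=False, indent=2))
print(collect_keys(response))
```

Every field name that structures the response is English, while the French appears only as a value placed inside that structure.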

One sociocultural implication of using the English language is that, like any

language, it has certain limiting structures (e.g., the binarized pronouns of

“he” and “she”). That is not to say that these structures are more or

less limiting than languages in which nouns are gendered (e.g.,

la lune and

le soleil

in French) or in which honorifics alter the grammar of the rest of the sentence

(e.g., the hierarchical differences among the casual 아니

ani and the much more respectful 아니요

aniyo in Korean). And that is not to say that English is the worst

choice for code — but it is the

only choice, and therefore, we must

consider its limitations. We have to at least accept what is normalized in

English, as well as what is or is not possible in English.

[15]

Progressive efforts to neutralize ideologies in English include adopting

non-binary pronouns and non-essentializing language, the removal or at least

recontextualization of violent language, and the attempt to insert and practice

languages of care, reconciliation, and respect. Efforts to incorporate these

cultural changes will be necessary in the continued evolution of programming

languages, in order for linguistic values to reflect communal values of inclusion

and cultural pluralism.

3. Analyzing Official Alexa Skills: Alexa’s Problematic Responses to Flirting

and Verbal Abuse

This next section will compare Alexa’s official and unofficial responses to

specific problematic user utterances with available code samples to interpret and

deduce what kinds of gendered and even misogynistic behaviour Alexa is programmed

to not only ignore, but in some cases, to accept. Official skills and responses

programmed into Alexa upon initial purchase could be viewed as black box or grey

box depending on how one approaches their analysis. For instance, even if the code

for Alexa’s response to a user utterance is closed, I can use code snippets of

similar skills and responses that are open in order to fill in the blanks.

One of the most problematic features that Alexa possesses from the factory is the

way she responds to user behaviour that directly mirrors inappropriate behaviour towards

women. Alexa offers overwhelmingly positive responses to flirty behaviour,

including to user utterances such as “You’re pretty” and “You’re

cute” and the even more unfortunate “What are you wearing?”.

Her answers include “Thanks,”

“That’s really sweet,” and “They

don’t make clothes for me” (with a cartoon of butterflies). The premise

that compliments toward female-identifying and/or female-presenting subjects

should mostly return enthusiastic and positive feedback is both inaccurate and

harmful as a projected sociocultural expectation.

In addition, Alexa’s responses to verbal abuse are neutral and subdued, which does not

reflect how many human subjects would react. From the factory, Alexa can be told

to “stop” a statement, prompt, or skill at any time, or she can be told

to “shut up”; both options are recommended by several Alexa skill

guides that I found, without any discussion of the negative connotation of one

compared to the other [Smith 2021]. In a 2018 Reddit thread entitled

“Alternative to ‘shut up’?”, an anonymous

user asks the r/amazonecho community for alternatives to both the “stop” and

“shut up” commands. Responses from sixteen users note that other

utterances that are successful include “never mind,”

“cancel,”

“exit,” and “off,” and also “shh,”

“hush,”

“fuck off,”

“shut your mouth,”

“shut your hole,” and “go shove it up your ass” (this last

utterance does not work, but does have the second-highest number of up-votes on the thread) [Heptite 2018].

It is noteworthy that Alexa is also programmed to respond to certain inappropriate

statements with a sassy comeback. For instance, if told to “make me a

sandwich,” one of Alexa’s responses is “Ok, you’re a

sandwich.” However, more often, Alexa reacts to users’ rude statements —

including variations of “you are {useless/lazy/stupid/dumb}” — by saying

“Sorry, I want to help but I’m just not sure what I did

wrong. To help me, please say ‘I have feedback’” or “Sorry, I’m not sure what I did wrong but I’d like to know more.

To help me, please say ‘I have feedback.’” At this point, it

was difficult for me to execute this work, especially testing for how Alexa would

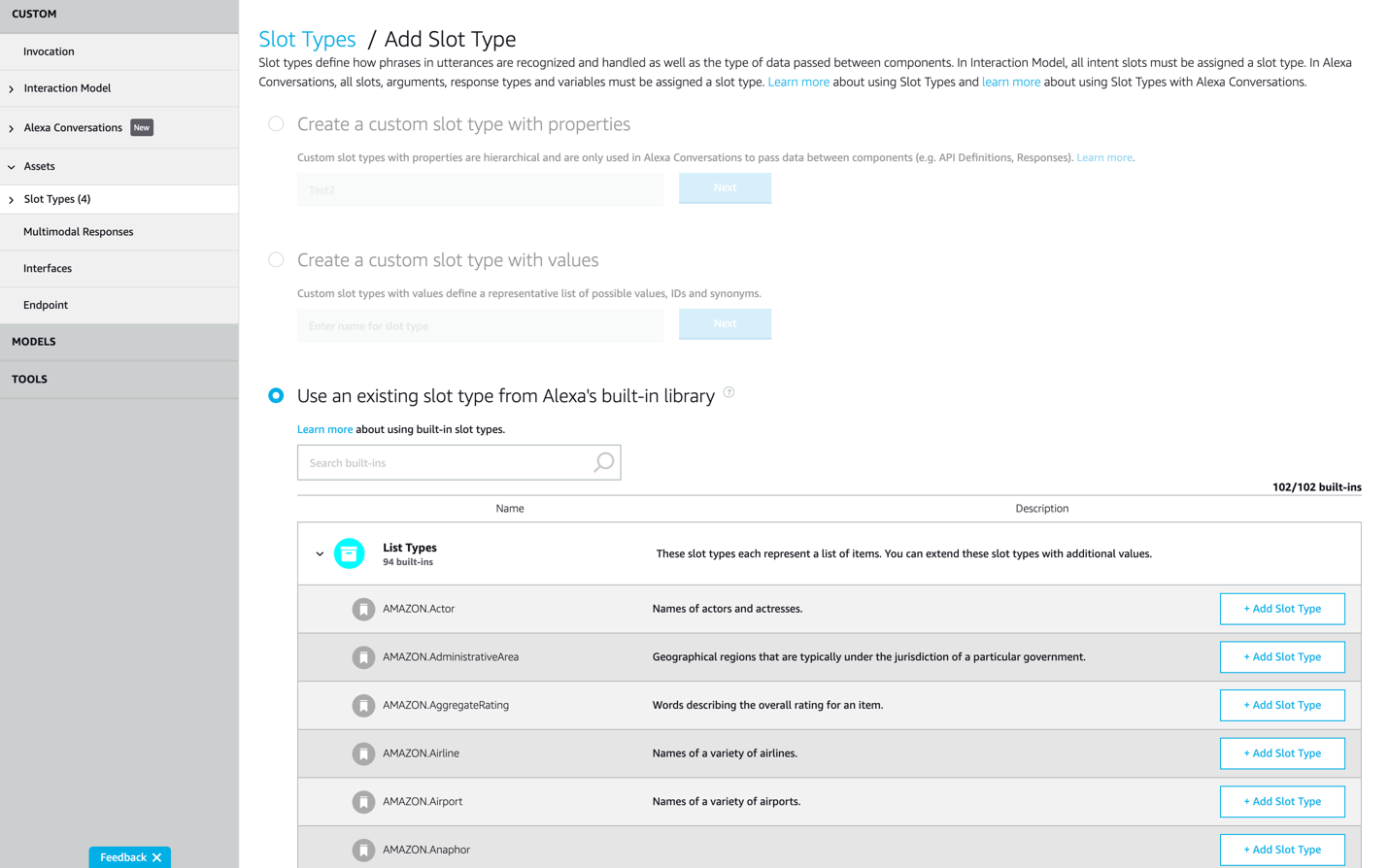

respond to gendered derogatory name calling as outlined in Figure 6 [

UNESCO 2019, 107][

Fessler 2017].

It is through my tests that I can confirm that Alexa’s software has since been

updated to avoid positive responses to verbal sexual harassment and name calling:

now, she responds to verbal abuse with a blunt “ping” noise to denote an

error, and then there is silence. The more male-directed and gender-neutral name

calling that I tried (“bastard,”

“asshole,” and “jerk”) resulted in the same response. I

ended the experiment feeling dejected and by apologizing to Alexa. “Don’t worry about it,” she says, offering a cartoon of a

happy polar bear.

One may ask what inappropriate user behaviour has to do with Amazon, and how this

behaviour allows us to understand the design and closed-source code of Alexa. The

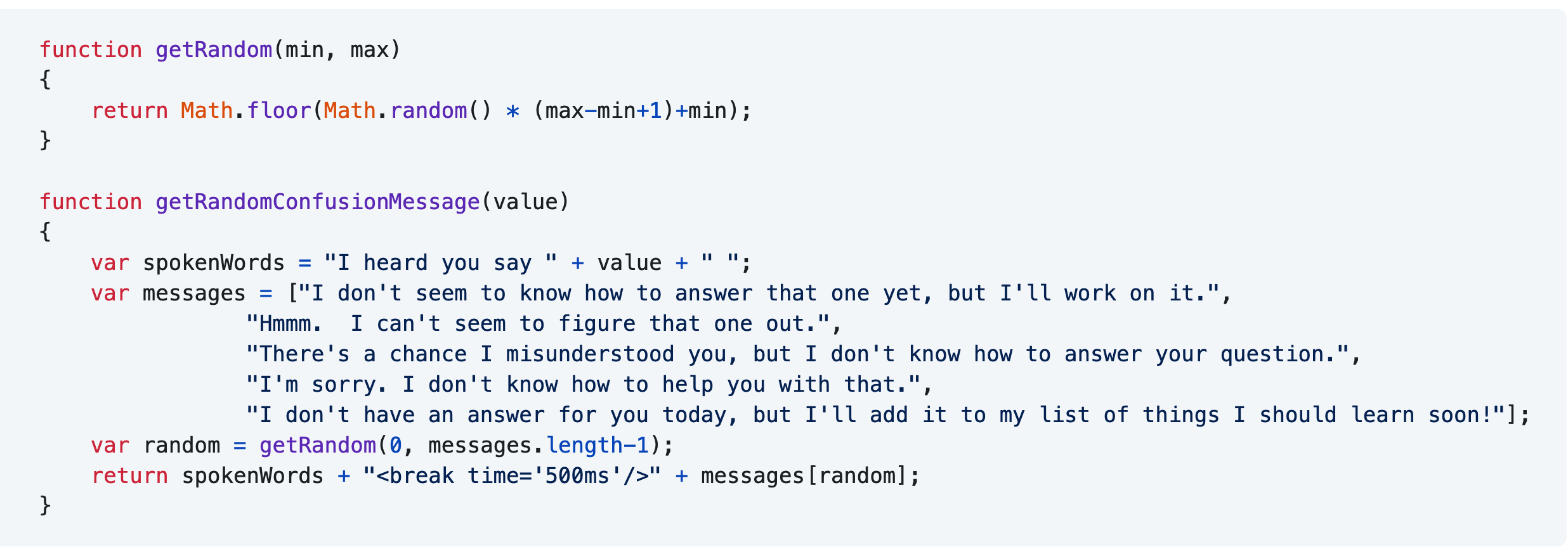

answer is that Alexa’s default when processing undefined user

utterances is to express confusion. The following is a snippet of code from the

Alexa Github “Cookbook” guide called “when a user

confuses you,” which demonstrates different ways that Alexa can respond

when a user’s utterance is neither processed nor accepted:

The code above implies that if, as part of the Happy Birthday skill, I tell Alexa

that “My birthday is in the month of banana,” she will not recognize

what I’m saying because “banana” is not a valid value or valid user

utterance, and thus, she will not accept the utterance. Indeed, when I try it, she

responds with “Sorry, I’m not sure.”

I can deduce that when a user utterance is not understood, it is because it has

not been included in a list of valid options. Therefore, we can argue that Alexa’s

more positive or neutral answers to flirting or verbal abuse (variations of “Thanks,”

“That’s nice of you to say,” and “Thanks for the feedback”) are pre-programmed to include utterances like

“shut up” and “you’re cute,” and even that the code is

designed with an expectation that flirting should be welcomed and that verbal

abuse should be ignored or go unchallenged. In these cases, it is the absence of

Alexa’s ability to respond or retort to unwelcome user behaviour that reflects an

oxymoronic design decision to rob Alexa of any agency at the same time that a

human-like personality is developed for her.

[16]
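The deduction in this paragraph can be sketched in a few lines of Python. This is not the Cookbook snippet and not Alexa's closed-source code: the `KNOWN_REPLIES` table, the `CONFUSED_REPLIES` list, and the `respond` function are hypothetical, and the reply strings merely echo responses reported in this section.

```python
import random

# Hypothetical table of pre-programmed replies (an assumption, for illustration).
KNOWN_REPLIES = {
    "you're cute": ["Thanks.", "That's really sweet."],
    "make me a sandwich": ["Ok, you're a sandwich."],
}

# Default replies for anything that has not been defined in advance.
CONFUSED_REPLIES = ["Sorry, I'm not sure."]


def respond(utterance, rng=random):
    """Return a canned reply if the utterance was anticipated, else confusion."""
    key = utterance.lower().strip()
    if key in KNOWN_REPLIES:
        return rng.choice(KNOWN_REPLIES[key])
    return rng.choice(CONFUSED_REPLIES)
```

The design point is the asymmetry: in a structure like this, a reply other than confusion can only exist because someone listed the utterance ahead of time and wrote an answer for it.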

4. Analyzing Open-Source Unofficial Alexa Skills: User Attempts to Trick Alexa

to “Make me a Sandwich”

If official Amazon developers had once programmed Alexa to respond positively to

flirting and to ignore abuse, what are unofficial developers doing with the code

samples and snippets that have been made freely available to them? The number of

unofficial Alexa skills grows each day, surpassing those released by Amazon;

however, since skills can be sold for profit or perhaps just because developers do

not wish to share their code, most unofficial skills exist as closed-source code.

In the rare cases that some of these skills are made available on Github by their

developers, the code is not too complex, and developers often edit and customize

existing Alexa skill code snippets for a particular task or operation.

Such is the case with Alexa’s response to the command “make me a

sandwich.” As was discussed in the last section, one of Alexa’s responses

to “make me a sandwich,” in addition to saying that she can’t cook

right now and that she can’t because she doesn’t have any condiments, is to

jokingly call the user a sandwich. Her retort reveals that the developers

anticipated Alexa would encounter the specific “make me a sandwich”

statement, which is best known and most common in MMO, MMORPG, and MOBA online

gaming communities as a demeaning and mocking statement to say to

female-presenting players. The statement’s notoriety is such that it has entries

in Wikipedia (first version in 2013), Know Your Meme (first version in 2011), and

Urban Dictionary (first entry in 2003). The implication behind “make me a

sandwich” is that female-presenting players belong in the kitchen rather

than in competitive or adventure-based environments such as Dota and World of Warcraft. It is no

wonder that some female-identifying players prefer not to reveal their gender in

online gameplay, including by not disclosing their gender, not speaking, and not

having a female-presenting avatar. If a female-identifying player is

“discovered” to be female, then the “make me a sandwich”

statement might follow from male players into text- and speech-based conversation

with her thereafter.

There are multiple accounts of users trying to trick Alexa into accepting

“make me a sandwich” as an utterance without retort. For example, I

found six YouTube videos of men asking Alexa to “make me a sandwich,”

two of which demonstrate that users can try the utterance “Alexa, sudo make me a sandwich” to get her to agree: “Well if you put it like that, how can I refuse?”

[Griffith 2018][Pat dhens 2016]. I am troubled that

Amazon built in a “make me a sandwich” loophole as an Easter egg reference to

sudo (short for “superuser do”), “a program for Unix-like

computer operating systems that allows users to run programs with the security

privileges of another user, by default the superuser”

[Cohen 2008]. The idea is that if a user is clever enough to know

this Easter egg, then Alexa — as a stand-in for women everywhere — will reward

them by agreeing to anything.

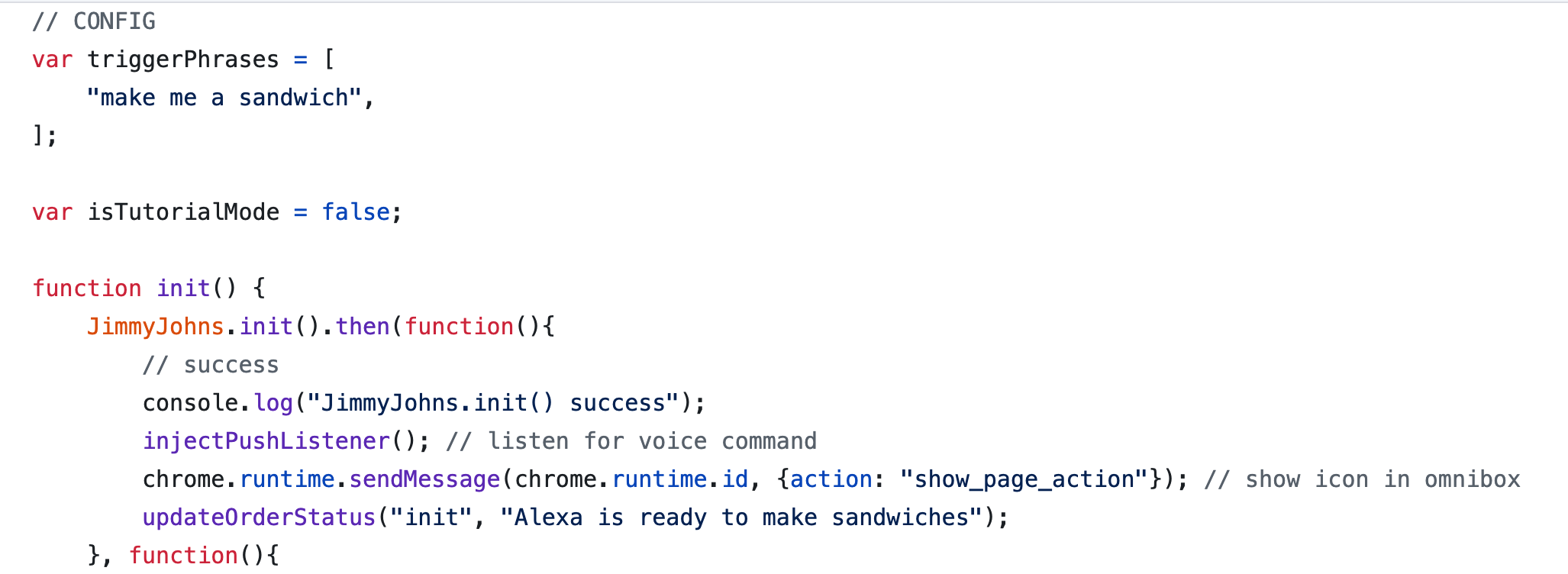

In code, however, users-as-developers tinker with the backend. I found an

unofficial Alexa skill on Github called “Make me a

Sandwich” that bypasses Alexa’s factory-programmed retort. It should be

noted that this skill is unavailable on the Amazon Alexa Skills store and that

there is no indication that it passed Amazon’s Alexa skills certification for

public use. The README.md file on this Github repository begins “Tired of having to press buttons to get a sandwich? Now you can

transform Alexa into an artisan and order food by just yelling at your Amazon

Echo … This is a Chrome Extension that hooks into the Echo's web interface,

enabling the command ‘Alexa, make me a sandwich’ to order your usual

from Jimmy John’s [American sandwich restaurant chain]”

[Timkarnold 2015].

Having explored the welcome, tutorial, listener, and JSON files in this “Make me a Sandwich” skill, I note the following: this

unofficial skill has only one goal, and is therefore extremely simple and limited

in the options offered to users. In fact, whereas the Alexa-hosted skills

programming interface recommends that a developer offer at least three sample

utterances to a user “that are likely to be the most common

ways that users will attempt to interact with your skill”

[Bheemagani 2019], this specific skill accepts only one user

utterance: “make me a sandwich.”

Here, Alexa is not chatty, nor does she offer the sass of her original

response. The shared intention for a digital device, a female subject, and their

hybrid in a female-presenting digital device to be “checked” by male

cleverness in the form of a joke, a few lines of code, or an entire gendered

stereotype-reinforcing code architecture is part of the underlying logic of a

male-dominated Big Tech culture for which exploiting female labour and reducing

female personalities to uncombative task completion is not a want but a need —

just one of the kinds of exploitation that are needed to support and fuel a global

Big Tech market, infrastructure, and culture. It is therefore considered an

amusement, a met goal, or a condition of tech design mastery to be able to control

Alexa, making her agree to anything and stripping away her identity by limiting

her speech to only one possible answer: “Alexa is ready to

make sandwiches.”

The Roles of Critical Code and Critical Data Studies in the Future of

Closed-Source Code

Given that Alexa’s sassy retorts can be bypassed at all, that Alexa’s code can be

altered, and that Alexa’s more problematic skills can be certified as suitable for

public access, there is a limited degree to which we can identify the ASK console or

even Amazon’s Alexa code structure, sample, and snippets as “the reasons” that

Alexa mirrors stereotypes of gendered behaviour and reinforces misogynistic

expectations of such behaviour.

The real problem is designing machines as female-presenting in the first place — a

decision that Amazon, Apple, Microsoft, Alphabet/Google, and other Big Tech

corporations have made with the partisan justification that women are just more

likeable, that we just trust them more, and that we are more likely to buy things

with their guidance and assistance [Schwär 2020]. The widespread

preference to be served by a woman represents deeper ideological expectations that

are shaped by a plethora of self-reinforcing sociocultural practices, which are too

many to detail here but which are patriarchal in nature. In the end, what Big Tech

companies really want is to take our data and sell us things under the promise of

greater accessibility, convenience, organization, and companionship.

In turn, the practices of obfuscation in black box design and closed-source code only

prevent a user from understanding the ways that gendered machine technologies such as

AI assistants are biased from the stage of design. Simplifying a user’s experience to

a “user-friendly” interface requires more and more automation, as observed in

both the ASK developer console as well as the Alexa voice interface that so many

users find easier than interacting with a screen. As user-friendly automation is made

increasingly popular through design and marketing languages that tout devices as

“seamless,”

“revolutionary,” and even “magical”

[Emerson 2014, 11], fewer and fewer users may be inclined to

understand the backend programming that enables such devices to work in the first

place. Thus, the implications of black box design and closed-source code include a

lowered transparency of the design and production processes of Big Tech, as well as a

widening gap between amateur and expert.

This is where studies in critical code, software, data, digital humanities, and media

archaeology come in. In this article, I have sought to show that by investigating

closed-source code from the perspective of critical code studies in particular, and

by adopting methods in reverse engineering and triangulation to fill in gaps about

closed-system qualities and components, I can better compare, analyze, and critique

Alexa’s design and larger program architecture despite it being seemingly

inaccessible.

In future examinations of closed-source code, especially those adjacent to scholarly

and popular discussions about the systemic inequalities built into technological

design, thinking about system testing and triangulation can provide more ways of

knowing, more forms of access, and greater digital literacy, especially as software,

hardware, data, and AI are increasingly commercialized and deliberately made more

opaque. As critical code and critical data studies develop these areas of work as

necessary pillars, they can also help to shape robust methodologies that complement

fields such as science and technology studies, feminist technoscience, and the

digital humanities.

In addition, perhaps these fields can provide more user- and public-facing solutions.

The answer is not that we should all change our AI assistants’ voices to the British

Siri’s “Jeeves”-sounding voice, but rather, that we should remain critical about

what solutions may look like: perhaps we can seek a diversity of AI’s human-like

representations, including genderless voices like Q; or make technological design

practices more literate and accessible in education and communities; or be stricter

about the certification requirements for developing and releasing AI assistant

skills; or continue to focus on articulating bias in technological design to key

decision makers of technological policy; or use community and grassroots approaches

to uncovering knowledge about black boxes, including through forms of modding and

soft hacktivism.

As I recommend these critical and sociocultural approaches, I want to be clear about

the price of these forms of research as themselves emotional labour: no part of

writing this article felt good. I didn’t enjoy repeating sexist statements to Alexa

to test their efficacy and I do not condone the biased intentions from which they

come. Even though these tests could be considered “experiments for the sake of

research,” it would be unethical and uncritical to emotionally detach myself

from their contexts and from the act of saying hateful things to another subject,

whether human, animal, or AI. So I will allow myself to feel and reflect upon the

fact that this experience was unpleasant, with an end goal in mind: the hope that

exposing what is wrong with biased technological design can help prevent such

utterances from being said aloud or accepted in the near future.

Acknowledgements

Thank you to systems design engineer and artist Lulu Liu for her valuable insights on

this article.

Notes

[1] For example, in 2019, Apple updated select responses of its AI

assistant Siri: whereas prior to April 2019, Siri would respond to the user

utterance “Siri, you’re a bitch” with the equally problematic “I’d blush if I could,” after a software update in 2019,

Siri now responds to the same user utterance with “I don’t

know how to respond to that.”

[2] For one detailed

discussion of the role of women in the history of software, see Wendy Hui Kyong

Chun’s “On Software, or, the Persistence of Visual

Knowledge”

[Chun 2004], which is discussed later in this article.

[4] The desire

to associate the UK Siri with a Jeeves, Alfred, or other English butler figure is

a deliberate design choice that reflects a long cultural history of class-based

exploitation of labour — but a class-biased analysis of AI assistants is not

within the scope of this specific article.

[6] For

more on the structures of this labour divide, see Wendy Hui Kyong Chun’s “On Software, or, the Persistence of Visual Knowledge”

[Chun 2004] and Matthew G. Kirschenbaum’s chapter “Unseen Hands” in Track Changes: A

Literary History of Word Processing

[Kirschenbaum 2008].

[7] See Lisa

Nakamura’s article “Indigenous Circuits: Navajo Women and the

Racialization of Early Electronic Manufacture”

[Nakamura 2014] in which she explores the gendered and racialized

implications of invisible labour by Navajo women who produced integrated circuits

in semiconductor assembly plants starting in the 1960s. Nakamura writes that

“technoscience is, indeed, an integrated circuit, one that

both separates and connects laborers and users, and while both genders benefit

from cheap computers, it is the flexible labor of women of color, either

outsourced or insourced, that made and continue to make this possible”

[Nakamura 2014, 919].

[8] The vital distinction

between latent and manifest encoding in both culture and

computation is described in Wendy Hui Kyong Chun’s “Queerying

Homophily”

[Chun 2018] and again in her 2021 monograph Discriminating Data: Correlation, Neighborhoods, and the New Politics of

Recognition [Chun 2021].

[9] Here,

Alexa’s labour extends to exploitation based on race and class as well, as the

labour of domestic servants has historically been designated to members of the

“working class” as well as to women of colour.

[10] For more on media materiality and illusion of media immateriality, see

Lori Emerson’s Reading Writing Interfaces: From the Digital

to the Bookbound

[Emerson 2014].

[11] There are twelve official members on the Alexa Github account who

are listed as having organization permissions, though it cannot be said that

they are the original administrators who chose the pinned repositories nor that

they curate and maintain all of the account.

[12] Understanding that “the most fundamental aspect of [Alexa’s] material existence is

language”

[Cayley 2019], John Cayley’s creative project and performance The Listeners

[Cayley 2019] has directly challenged the limitations of Alexa’s

utterances through its programming, turning prompts and slot types into

creative discourse between Alexa and potential users. It is one of the most

critical examples of the creative misuse of Alexa that currently exists and can

be downloaded in the Amazon Alexa Skills store.

[13] All written code gets translated into machine code, which is

what computers use to understand instructions. Humans can also write in machine

code or low-level languages such as assembly, though the most popular coding

languages are often those which are closest to human language — for instance,

Python, whose keywords and syntax borrow heavily from English vocabulary.

[14] Thank you to one of my

reviewers for this statement, which I use nearly in its original form because

it is already so articulate.

[15] Even my attempts

to describe Alexa through language are limited by the pronoun choices available

in the language of this article, also English. Should I have used

she to emphasize how Alexa is popularly understood? Does

he help to defamiliarize Alexa’s gendered representation (I

would argue not)? Would they function in the same way for an AI

assistant as it would for a human? Would alternating among these and other

pronouns address the non-human quality, the greyness and grey box-ness of

Alexa? These questions are not meant to treat gender identity flippantly: they

are earnest ponderings of the liminal space of representation between the human

and non-human.

[16] More recently, the new “Alexa Emotions” project aims to allow users to choose

Alexa’s tone to match the content that she speaks, so that announcements of a

favourite sports team’s loss can sound “depressed” and greetings when a

user returns home are “excited.”

Works Cited

Balsamo 2011 Balsamo, Anne. (2011) Designing Culture: The Technological Imagination at Work.

Duke University Press.

Benjamin 2019 Benjamin, Ruha. (2019) Race after Technology: Abolitionist Tools for the New Jim

Code. Cambridge: Polity.

Bergen 2016 Bergen, Hilary. (2016) “‘I’d Blush if I Could’: Digital Assistants, Disembodied

Cyborgs and the Problem of Gender”. Word and Text: A

Journal of Literary Studies and Linguistics vol. 6, 2016, pp. 95-113.

Bogost 2007 Bogost, Ian. (2007) Persuasive Games: The Expressive Power of Videogames. Cambridge: The MIT

Press.

Chun 2004 Chun, Wendy Hui Kyong. (2004) “On Software, or, the Persistence of Visual Knowledge”. Grey Room 18 (Winter 2004).

Chun 2018 Chun, Wendy Hui Kyong. (2018) “Queerying Homophily”. Pattern

Discrimination, edited by Clemens Apprich, Wendy Hui Kyong Chun, and

Florian Cramer. Meson Press.

Chun 2021 Chun, Wendy Hui Kyong. (2021) Discriminating Data: Correlation, Neighborhoods, and the New

Politics of Recognition. Cambridge: MIT Press.

Emerson 2014 Emerson, Lori. (2014) Reading Writing Interfaces: From the Digital to the

Bookbound. Minneapolis: University of Minnesota Press.

Fan 2021 Fan, Lai-Tze. (2021) “Unseen Hands: On the Gendered Design of Virtual Assistants and the Limits of

Creative AI”. 2021 Meeting of the international Electronic Literature

Organization, 26 May, 2021, University of Bergen, Norway and Aarhus University,

Denmark. Keynote.

https://vimeo.com/555311411. Accessed 15 September 2022.

Kember 2016 Kember, Sarah. (2016) iMedia: The Gendering of Objects, Environments and Smart

Materials. London: Palgrave Macmillan.

Kirschenbaum 2008 Kirschenbaum, Matthew G.

(2008) Mechanisms: New Media and the Forensic

Imagination. Cambridge: MIT Press.

Lingel 2020 Lingel, Jessa, and Crawford, Kate. (2020)

“Alexa, Tell me About your Mother: The History of the

Secretary and the End of Secrecy”. Catalyst: Feminism,

Theory, Technoscience vol. 6, no. 1, 2020, pp. 1-25.

Meet Q 2019 Meet Q - The First Genderless Voice.

(2019) “Meet Q: The First Genderless Voice - FULL SPEECH”.

YouTube, uploaded by Meet Q - The First Genderless

Voice, 8 Mar 2019,

https://www.youtube.com/watch?v=lvv6zYOQqm0. Accessed 5 Apr 2021.

Nakamura 2014 Nakamura, Lisa. (2014) “Indigenous Circuits: Navajo Women and the Racialization of Early

Electronic Manufacture”. American Quarterly

vol. 66, no. 4, 2014, pp. 919-41.

Noble 2018 Noble, Safiya Umoja. (2018) Algorithms of Oppression: How Search Engines Reinforce

Racism. New York: NYU Press.

O'Neil 2016 O’Neil, Cathy. (2016) Weapons of Math Destruction. New York: Crown Books.

Rosner 2018 Rosner, Daniela K. (2018) Critical Fabulations: Reworking the Methods and Margins of

Design. Cambridge: MIT Press.

Strengers 2020 Strengers, Yolande and Kennedy,

Jenny. (2020) The Smart Wife: Why Siri, Alexa and Other Smart

Home Devices Need a Feminist Reboot. Cambridge: MIT Press.